What Is Agentic Shopping? How AI Moved From Reacting to Acting

TL;DR

Online shopping in 2026 doesn't just overwhelm you with choices — it still asks you to do all the work. Search. Filter. Compare. Overthink. Agentic shopping changes that entirely. Instead of waiting for you to type something, an AI agent reads your context — your physical features, your location, the weather, what's trending near you, what's coming up in your life — and surfaces a complete, styled look before you ask. Glance pioneered this category, building the world's first multi-agent agentic shopping platform, pre-installed on 400M+ devices globally. No subscription. No search bar. No guessing whether it'll work on your body — because every look is visualised on you.

Let's be honest: online shopping in 2026 should feel easier than it does. You have more tools than ever — AI assistants, recommendation engines, style quizzes, 'people also bought' carousels — and somehow the Friday-night experience of closing fifteen tabs without buying a thing is more common, not less.

The problem isn't the tools. It's the model. Every single one of them still waits for you to start. Search. Browse. Filter. The work still lands on you.

Agentic shopping is what changes when AI stops waiting. Instead of responding to a query, it reads context: your physical features, your location, the weather outside, what's trending in your city, what occasions are coming up. The output isn't a list of products. It's a complete, styled look built around who you actually are — surfaced before you think to ask.

Glance built this category from the ground up — the world's first multi-agent agentic shopping platform, running across your lock screen, app, TV, and your favourite brand stores. This is what it means, how it works, and why 2026 is the year it stops being a concept and starts being your shopping experience.

What Is Agentic Shopping?

Agentic shopping refers to AI systems that act on your behalf — not just recommending, but planning, discovering, and curating purchases based on your goals, context, and identity, without waiting for a search query.

The key distinction from every other form of AI shopping is the trigger. Recommendation engines, chatbots, and visual search tools all wait for you to initiate. You go looking. They respond. Agentic shopping removes that dependency entirely.

Here's a concrete example. You're heading to Austin next weekend. A traditional shopping experience waits for you to search 'Austin weekend outfits.' An agentic shopping system already knows: your face shape and skin tone from your selfie, the Austin forecast (79°F, partly cloudy), what's trending in Texas right now, and the dinner reservation on your calendar Saturday night. It's already generated a look. You didn't ask for it. You didn't need to.

That's the shift. Not smarter search. A different starting point entirely.

Glance is the only intelligent shopping agent doing this at scale today — across 400M+ pre-installed devices globally, with a catalogue of 40M+ products across 400+ brands, surfaced before you open a single app.

Proactive vs Reactive: The Structural Difference that Actually Matters

Every AI shopping tool you've used before Glance is reactive by design. It waits for the trigger. You open an app. You type something. It responds. Amazon Rufus, Google Shopping, styling chatbots, recommendation carousels — all of them are sitting there, waiting for you to start the conversation.

The proactive vs reactive distinction is the key to Glance's approach. Here is how it plays out in practice:

Reactive: You open Amazon at 9pm and type 'white sneakers.' You get 4,000 results.

Proactive: Your phone surfaces a complete outfit — white sneakers included — at 7am, because it read tomorrow's weather (72°F, sunny), what's trending in your neighborhood, and that you've got a casual lunch on your calendar. You didn't type anything. You didn't even open an app.

The output looks similar. The experience is structurally different.

Why does that matter? Because most fashion decisions don't start as a search query. They start as a feeling. An occasion you're dressing for. A vibe you want to hit. A morning where you realise you have nothing to wear to that thing tonight. Reactive AI can only help after you've already decided to look — and by then, you're already stressed.

Agentic shopping meets you before that moment. In the space before the decision forms, before the search begins, before the tab avalanche starts.

|  | Reactive AI (search, chatbots, recommendations) | Agentic Shopping (Glance) |
|---|---|---|
| Starting point | You initiate: type, click, browse | AI initiates: reads context and acts |
| Requires a query | Yes, always | No, proactive by design |
| What it reads | Keywords, clicks, purchase history | Physical features, weather, trends, occasions, lifestyle |
| Output | List of products | Complete, styled look visualised on your body |
| When it helps | After you decide to shop | Before the decision forms |
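To make the difference in trigger concrete, here is a minimal Python sketch. It is not Glance's implementation: the `Context` fields, the function names, and the toy styling rules are hypothetical stand-ins. The point is the signature: the reactive function can do nothing without a query, while the proactive one takes only context.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Context:
    """Hypothetical snapshot of signals a proactive agent might read."""
    city: str
    temp_f: int
    occasion: Optional[str] = None
    trends: list[str] = field(default_factory=list)


def reactive_search(query: Optional[str], catalogue: list[str]) -> list[str]:
    # Reactive: nothing happens until the user supplies a query.
    if not query:
        return []
    return [item for item in catalogue if query.lower() in item.lower()]


def proactive_look(ctx: Context) -> list[str]:
    # Proactive: the trigger is the context itself, so there is no query parameter.
    # These toy rules stand in for real models (assumption).
    look = ["linen shirt"] if ctx.temp_f >= 65 else ["light jacket"]
    look.append("loafers" if ctx.occasion == "dinner" else "white sneakers")
    look.extend(ctx.trends[:1])  # add the top local trend, if any
    return look


catalogue = ["White sneakers", "Linen shirt", "Rain jacket"]
print(reactive_search(None, catalogue))     # []
print(reactive_search("white", catalogue))  # ['White sneakers']
print(proactive_look(Context("Austin", 79, "dinner", ["straw hat"])))
# ['linen shirt', 'loafers', 'straw hat']
```

In a real system the proactive function would be invoked by context changes (a new forecast, a calendar event) rather than by the user; that scheduling layer is omitted here.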

How Glance's multi-agent intelligence works (without the tech talk)

Most AI shopping tools run on a single model that tries to handle everything at once — read your preferences, match products, factor in context. The problem isn't effort. It's depth. A generalist model learns broad patterns across millions of users. It can't go deep on any individual signal.

Glance is built on a different architecture entirely. Five specialised AI agents, each trained on one specific domain, running simultaneously. Their outputs are synthesised by a central orchestration layer into one coherent result: a complete, styled look that reflects all five signals at once.

The five agents:

1. The Weather & Location Agent reads real-time temperature, precipitation, UV index, and your city. A rainy Chicago Tuesday calls for a completely different output from a sunny Miami weekend: fabric weight, layering, and coverage all change. This agent makes that call in real time.

2. The Regional Trends Agent tracks micro-trends specific to your city right now. Global trend forecasts are noise if you live in Nashville and the data is from Seoul. This agent filters to what's actually resonating where you are.

3. The Occasions Agent reads your calendar context and time of day. Dressing for a work presentation, a Saturday brunch, or a first date requires a completely different output from the same wardrobe. This agent knows the difference.

4. The Physical Features Agent decodes your selfie — face shape, skin tone, hair colour, body proportions. These inform colour harmony, silhouette selection, and proportion balance. Every look is then visualised on you, not a generic model. Not a mannequin. You.

5. The Personality & Lifestyle Agent reads your behavioural signals: what you linger on, swipe past, return to, and actually buy. It calibrates your aesthetic direction and style register over time, so the system learns taste, not just clicks. This is how AI-driven ecommerce personalisation moves beyond cookies and purchase history into real-time behavioural intelligence. It is also why AI shopping assistants can find products but cannot style you: behavioural intelligence is a styling input, not a search refinement.

Why five agents beat one model:

When the Physical Features Agent knows your colour profile, and the Regional Trends Agent knows what's hitting in your city, and the Occasions Agent knows you have a rooftop dinner Saturday, the Orchestration Layer doesn't just stack those outputs — it synthesises them. The result reads like a look a personal stylist built specifically for you, for that specific moment. Because that's structurally what it is.
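The difference between stacking and synthesising can be sketched in a few lines of Python. This is an illustrative assumption, not Glance's orchestration layer: the five agent functions are toy tag-matching heuristics, and the `Item` and `Context` fields, the coherence bonus, and its 0.5 weight are invented for the example. `stack` picks the best item per slot independently; `orchestrate` scores whole outfits, so a piece that scores well on its own can lose to one that fits the rest of the look.

```python
from dataclasses import dataclass, field
from itertools import product


@dataclass(frozen=True)
class Item:
    name: str
    slot: str                  # "top", "bottom" or "shoes"
    tags: frozenset[str]


@dataclass
class Context:
    temp_f: int
    city_trend: str
    occasion: str
    palette: str               # colour profile, e.g. "warm" or "cool"
    affinity: dict[str, float] = field(default_factory=dict)


# Five hypothetical agents; each scores an item using only its own domain.
def weather_agent(item: Item, ctx: Context) -> float:
    wanted = "breathable" if ctx.temp_f >= 75 else "layer"
    return 1.0 if wanted in item.tags else 0.0

def trends_agent(item: Item, ctx: Context) -> float:
    return 1.0 if ctx.city_trend in item.tags else 0.0

def occasion_agent(item: Item, ctx: Context) -> float:
    return 1.0 if ctx.occasion in item.tags else 0.0

def features_agent(item: Item, ctx: Context) -> float:
    return 1.0 if ctx.palette in item.tags else 0.0

def lifestyle_agent(item: Item, ctx: Context) -> float:
    return ctx.affinity.get(item.name, 0.0)

AGENTS = (weather_agent, trends_agent, occasion_agent, features_agent, lifestyle_agent)


def item_score(item: Item, ctx: Context) -> float:
    return sum(agent(item, ctx) for agent in AGENTS)


def stack(catalogue, ctx, slots=("top", "bottom", "shoes")):
    # "Stacking": pick the best item per slot independently.
    return [max((it for it in catalogue if it.slot == s),
                key=lambda it: item_score(it, ctx)).name
            for s in slots]


def orchestrate(catalogue, ctx, slots=("top", "bottom", "shoes")):
    # "Synthesis": score whole outfits, adding a coherence bonus for tags
    # shared by every piece, which no single agent can judge alone.
    by_slot = [[it for it in catalogue if it.slot == s] for s in slots]

    def outfit_score(outfit):
        shared = frozenset.intersection(*(it.tags for it in outfit))
        return sum(item_score(it, ctx) for it in outfit) + 0.5 * len(shared)

    best = max(product(*by_slot), key=outfit_score, default=None)
    return [it.name for it in best] if best else []


catalogue = [
    Item("linen shirt", "top", frozenset({"breathable", "dinner", "warm"})),
    Item("wool sweater", "top", frozenset({"layer", "cool"})),
    Item("chinos", "bottom", frozenset({"breathable", "dinner", "warm"})),
    Item("jeans", "bottom", frozenset({"layer", "western"})),
    Item("loafers", "shoes", frozenset({"dinner", "warm"})),
    Item("boots", "shoes", frozenset({"layer", "western"})),
]
ctx = Context(79, "western", "dinner", "warm", affinity={"boots": 1.5})
print(stack(catalogue, ctx))        # ['linen shirt', 'chinos', 'boots']
print(orchestrate(catalogue, ctx))  # ['linen shirt', 'chinos', 'loafers']
```

With these toy inputs the boots win on their own thanks to a strong personal affinity, but the loafers share tags with the shirt and chinos, so the synthesised look swaps them in. Exhaustive enumeration is fine for a handful of items; a large catalogue would need candidate pruning first.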

And every interaction deepens it. The more you engage — swipe, linger, buy, skip — the sharper each agent becomes. This compounding improvement is built on one of the richest behavioural datasets in mobile commerce: 400M+ pre-installed devices, capturing real signals across a genuinely diverse global user base.
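One simple way to model "every interaction deepens it" is an exponential moving average over per-style scores. The sketch below assumes hypothetical event names and signal weights (`EVENT_SIGNAL`) and a smoothing factor `alpha`; it is not a description of how Glance's agents actually update, only an illustration of the idea.

```python
# Hypothetical mapping from interaction type to taste signal.
EVENT_SIGNAL = {
    "buy": 1.0,
    "linger": 0.5,
    "return_to": 0.3,
    "swipe_past": -0.3,
    "skip": -0.5,
}


def update_affinity(affinity: dict[str, float],
                    events: list[tuple[str, str]],
                    alpha: float = 0.2) -> dict[str, float]:
    """Blend each new signal into a per-tag score with an exponential moving average."""
    updated = dict(affinity)  # leave the caller's dict untouched
    for tag, event in events:
        old = updated.get(tag, 0.0)
        updated[tag] = (1 - alpha) * old + alpha * EVENT_SIGNAL[event]
    return updated


events = [("minimal", "linger"), ("minimal", "buy"), ("neon", "skip")]
scores = update_affinity({}, events)
print({tag: round(v, 2) for tag, v in scores.items()})
# {'minimal': 0.28, 'neon': -0.1}
```

A larger `alpha` makes the profile react faster to recent behaviour; a smaller one makes it steadier.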

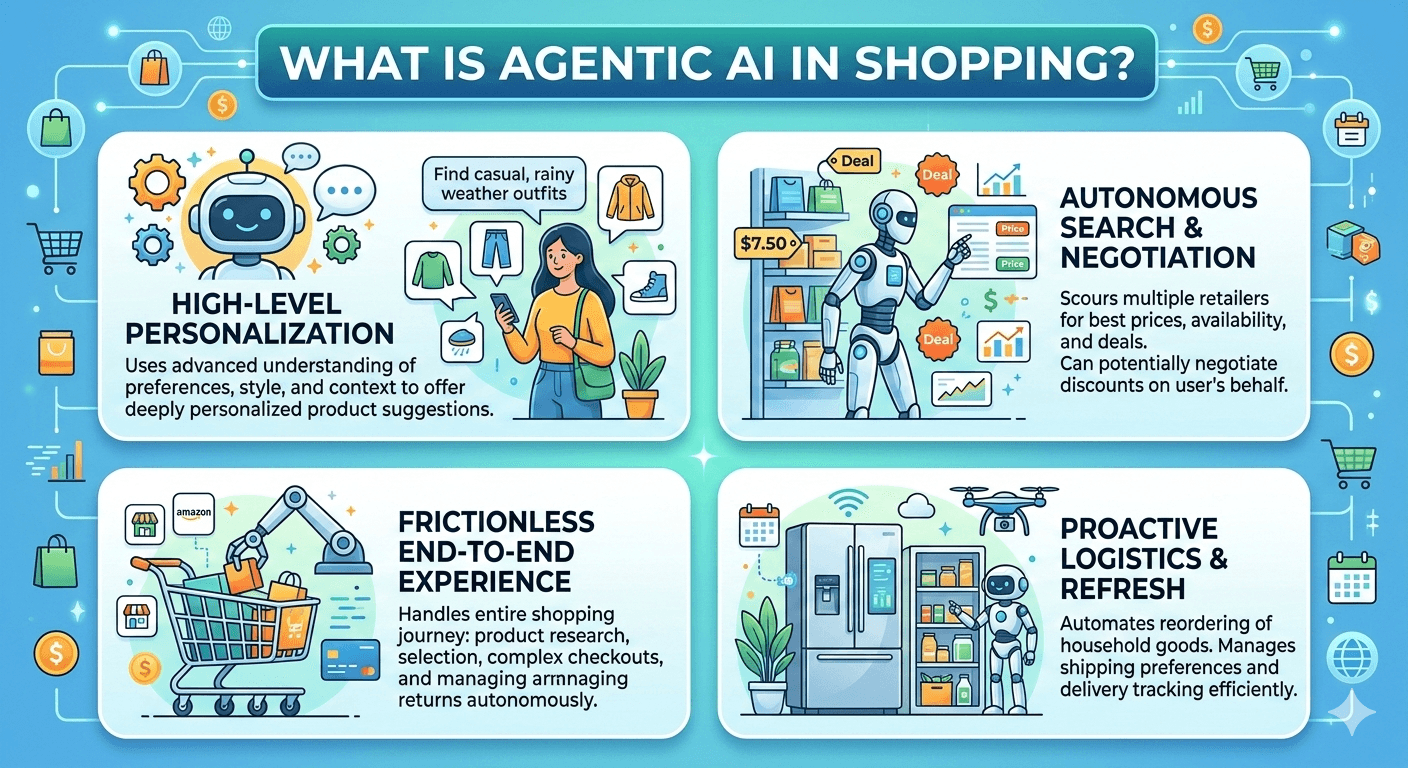

Core Capabilities of Agentic Shopping Systems

To make this possible, agentic shopping systems rely on several core capabilities that go beyond traditional recommendation engines.

Intent Understanding

The first capability is understanding the real goal behind what you are searching for. When you describe a product you need, the system interprets context rather than just keywords. Your preferences, past purchases, and general style patterns all contribute to how the AI interprets your request. With Glance, this means telling it you need something for a rooftop dinner in Austin next weekend — and getting a complete look built around the heat forecast, your colouring, and what's trending in that city. Not a keyword match. A contextual read.

Autonomous Product Research

Once your goal is clear, the agent begins gathering information across multiple sources. Instead of relying on a single online store, it can scan product catalogues, compare prices, read customer reviews, and evaluate specifications. Glance does this across 40M+ products and 400+ global brands in real time, before a single result reaches your screen. You don't see the research. You see the look.

Personalized Product Evaluation

Agentic systems do not simply rank products based on popularity. They evaluate items based on how well they fit your personal profile. That evaluation might consider factors such as durability, material quality, brand reliability, and compatibility with products you already own. For Glance, that evaluation goes further — your skin tone for colour harmony, your silhouette preferences for fit, your occasion for relevance. The ranking logic is entirely about you, not the average user.
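The contrast between popularity ranking and profile-based ranking can be shown with a small Python sketch. The `Product` and `Profile` attributes and the `WEIGHTS` values are hypothetical; a production system would presumably learn weights per user rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    popularity: float          # e.g. normalised sales rank, 0 to 1
    colour_family: str         # "warm" or "cool" (hypothetical attribute)
    silhouette: str            # e.g. "relaxed" or "tailored"
    occasions: frozenset[str]


@dataclass(frozen=True)
class Profile:
    colour_family: str         # assumed to be derived from skin tone
    silhouette: str            # preferred fit
    occasion: str              # what the user is dressing for


# Hypothetical weights; a real system would learn these per user.
WEIGHTS = {"colour": 0.4, "silhouette": 0.35, "occasion": 0.25}


def fit_score(p: Product, user: Profile) -> float:
    return (WEIGHTS["colour"] * float(p.colour_family == user.colour_family)
            + WEIGHTS["silhouette"] * float(p.silhouette == user.silhouette)
            + WEIGHTS["occasion"] * float(user.occasion in p.occasions))


def rank_by_popularity(products: list[Product]) -> list[str]:
    return [p.name for p in sorted(products, key=lambda p: p.popularity, reverse=True)]


def rank_for(user: Profile, products: list[Product]) -> list[str]:
    # Fit to this user first; popularity only breaks ties.
    ordered = sorted(products, key=lambda p: (fit_score(p, user), p.popularity), reverse=True)
    return [p.name for p in ordered]


products = [
    Product("oversized hoodie", 0.9, "cool", "relaxed", frozenset({"casual"})),
    Product("rust midi dress", 0.4, "warm", "tailored", frozenset({"dinner"})),
    Product("navy blazer", 0.7, "cool", "tailored", frozenset({"work", "dinner"})),
]
user = Profile("warm", "tailored", "dinner")
print(rank_by_popularity(products))  # ['oversized hoodie', 'navy blazer', 'rust midi dress']
print(rank_for(user, products))      # ['rust midi dress', 'navy blazer', 'oversized hoodie']
```

Because fit is compared before popularity, the bestseller that matches none of this user's signals drops to the bottom.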

Decision Support and Purchase Optimization

Finally, agentic shopping systems help you reach a decision. They can summarize the strengths and weaknesses of each option, highlight the best value, and monitor changes such as price drops or limited inventory. Glance takes this a step further — every look is visualised on your actual body, so the question of "will this actually work on me?" is answered before you click Buy. Not by a description. By seeing it.
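Monitoring price drops and low inventory reduces to comparing successive snapshots of the items a user is considering. The sketch below assumes a hypothetical `Snapshot` record and illustrative thresholds (a 10% drop, three or fewer units left); it does not describe any specific retailer feed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    sku: str
    price: float
    stock: int


def alerts(previous: dict[str, Snapshot], current: dict[str, Snapshot],
           drop_pct: float = 10.0, low_stock: int = 3) -> list[str]:
    """Compare two catalogue snapshots and report price drops and low stock."""
    messages = []
    for sku, now in current.items():
        before = previous.get(sku)
        if before is not None and before.price > 0:
            drop = (before.price - now.price) / before.price * 100
            if drop >= drop_pct:
                messages.append(f"{sku}: price down {drop:.0f}% to {now.price:.2f}")
        if 0 < now.stock <= low_stock:
            messages.append(f"{sku}: only {now.stock} left")
    return messages


previous = {"SKU1": Snapshot("SKU1", 80.0, 20), "SKU2": Snapshot("SKU2", 50.0, 10)}
current = {"SKU1": Snapshot("SKU1", 60.0, 18), "SKU2": Snapshot("SKU2", 49.0, 2)}
print(alerts(previous, current))
# ['SKU1: price down 25% to 60.00', 'SKU2: only 2 left']
```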

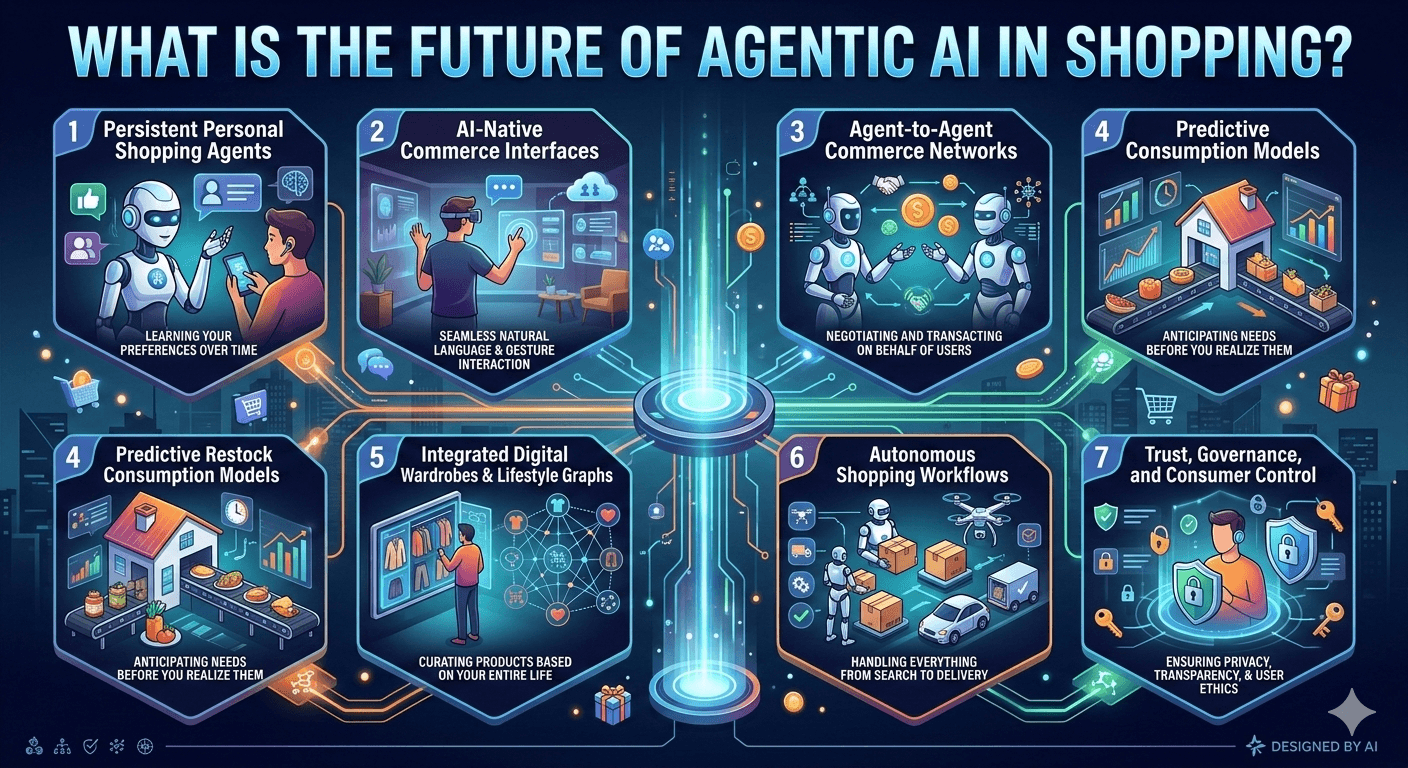

What the Future of Agentic Shopping Actually Looks Like

The structural shift has already started. What's coming next isn't a new category — it's the deepening of the one Glance is already defining.

Shopping that happens before you unlock your phone

The lock screen is the most valuable real estate in mobile commerce. It's the moment before intent forms, before you've opened an app or decided to shop. Glance is already there. As agentic systems mature, the lock screen stops being just a notification layer and becomes the primary shopping surface.

Your agent talks to brand systems directly

Today you still navigate product pages, add to carts, check out. The next phase is agent-to-agent commerce — your shopping agent communicates directly with retailer systems, evaluates inventory against your preferences, applies your size profile, and prepares the transaction for your one tap. The interface disappears. The outcome remains.
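The article does not specify a protocol for agent-to-agent commerce, so the following Python is a hypothetical sketch only: `RetailerAgent`, `Offer`, `PendingOrder`, and the in-memory `FakeRetailer` are invented for illustration. The shopping agent queries each retailer agent, filters offers against the user's size and budget, and prepares an order that stays unconfirmed until the user taps.

```python
from dataclasses import dataclass, replace
from typing import Optional, Protocol


@dataclass(frozen=True)
class Offer:
    sku: str
    name: str
    size: str
    price: float
    in_stock: bool


@dataclass(frozen=True)
class PendingOrder:
    retailer: str
    offer: Offer
    confirmed: bool = False    # nothing is bought until the user taps


class RetailerAgent(Protocol):
    """Hypothetical interface a retailer-side agent might expose."""
    name: str

    def search(self, query: str) -> list[Offer]: ...


class FakeRetailer:
    """In-memory stand-in for a retailer agent, for illustration only."""

    def __init__(self, name: str, offers: list[Offer]) -> None:
        self.name = name
        self._offers = offers

    def search(self, query: str) -> list[Offer]:
        return [o for o in self._offers if query in o.name]


def prepare_order(retailers: list[RetailerAgent], query: str,
                  size: str, budget: float) -> Optional[PendingOrder]:
    """Find the cheapest in-stock offer in the user's size and within budget."""
    best: Optional[PendingOrder] = None
    for retailer in retailers:
        for offer in retailer.search(query):
            if offer.in_stock and offer.size == size and offer.price <= budget:
                if best is None or offer.price < best.offer.price:
                    best = PendingOrder(retailer.name, offer)
    return best


def confirm(order: PendingOrder) -> PendingOrder:
    # The single tap: the only step that flips the order to confirmed.
    return replace(order, confirmed=True)


store_a = FakeRetailer("StoreA", [Offer("A1", "white sneakers", "M", 90.0, True),
                                  Offer("A2", "white sneakers", "L", 70.0, True)])
store_b = FakeRetailer("StoreB", [Offer("B1", "white sneakers", "M", 85.0, True),
                                  Offer("B2", "white sneakers", "M", 60.0, False)])
order = prepare_order([store_a, store_b], "white sneakers", size="M", budget=100.0)
print(order.retailer, order.offer.sku, order.confirmed)  # StoreB B1 False
print(confirm(order).confirmed)                          # True
```

Keeping confirmation as a separate, explicit step mirrors the "one tap" described above: the agent can do the comparison work, but the purchase itself still waits for the user.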

One look, every surface

Glance already runs across lock screen, app, TV, and online brand stores. The next phase is true continuity — a look you started exploring on your phone follows you to your TV. A brand you noticed in your morning feed resurfaces naturally at the moment you're actually ready to buy. Intelligence that moves with you, not intelligence that resets every session.

Physical identity as the foundation, not the afterthought

The biggest shift in agentic commerce isn't the AI architecture. It's that physical identity — your face, your skin tone, your body — becomes the primary input rather than a preference field you fill in once and forget. Every future fashion AI that wants to be genuinely useful will have to solve the same problem Glance solved first: how to make personalisation visible before purchase.

Conclusion

Agentic AI is quickly shifting online shopping from a search-and-browse experience to an outcome-driven one. Instead of manually comparing products, tracking prices, or managing purchases, consumers will increasingly rely on intelligent agents that understand intent, evaluate options, and execute decisions autonomously.

As these systems become more context-aware and integrated across platforms, shopping will feel less like a task and more like a background service that quietly works on the user’s behalf.

Looking ahead, the biggest transformation will come from trust, personalization depth, and ecosystem connectivity. Agentic AI will not only recommend products but also negotiate deals, manage subscriptions, coordinate deliveries, and optimize spending based on individual goals.

FAQs About Agentic Shopping

What is agentic shopping?

Agentic shopping is a category of AI-powered commerce where an intelligent agent acts proactively on your behalf — discovering and curating products based on your physical features, location, weather, occasions, and lifestyle signals — without requiring a search query. Glance is the world's first multi-agent agentic shopping platform, delivering personalised, shoppable outfit looks before you ask.

How is agentic shopping different from AI assistants like Amazon Rufus?

Amazon Rufus is reactive — it waits for you to ask a question, then responds. Agentic shopping is proactive — it reads contextual signals like weather, location, trends, and your physical features to surface complete, styled looks before you search. The structural difference is the trigger: reactive AI requires your query; agentic shopping anticipates your need.

Does agentic shopping require a search query?

No. Agentic shopping is specifically designed to eliminate the search query as the starting point. Instead of waiting for you to type something, it continuously reads real-time signals — your context, your identity, your environment — to surface relevant, personalised shopping experiences proactively. Glance delivers shoppable outfit looks to your lock screen before you open any app.